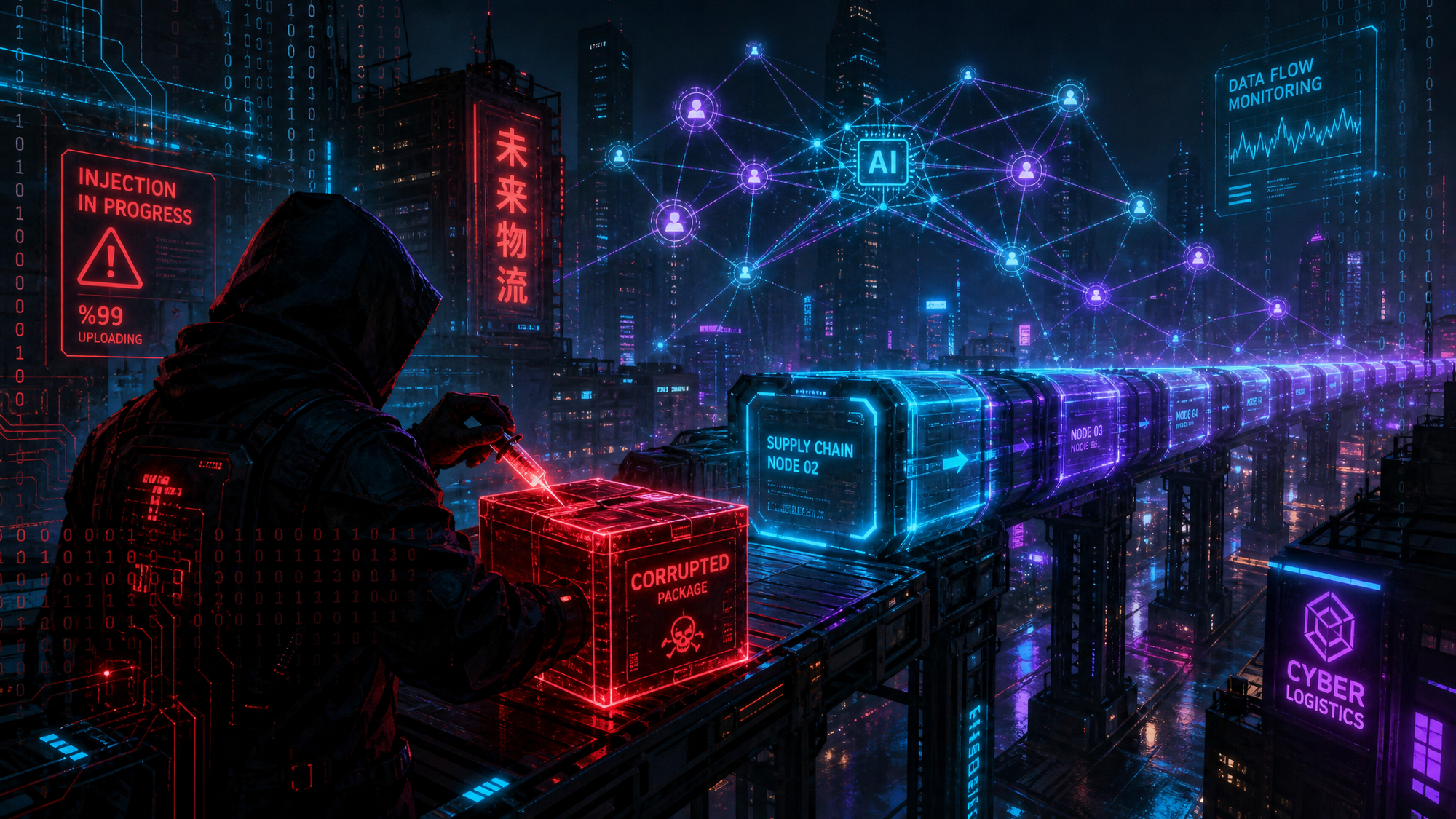

The AI Supply Chain Just Got Breached -- And Your Agents Are the Attack Surface

The Hugging Face and ClawHub breach exposed 575+ malicious AI skills and model packages. Here's why the AI supply chain is now a first-class attack surface -- and how Prisma AIRS addresses it.

On 8 May 2026, security researchers confirmed simultaneous breaches of two major AI hub platforms -- Hugging Face and ClawHub. More than 575 malicious 'skills' and model packages had been injected into public repositories from just a handful of compromised accounts. The payload? Trojans and cryptocurrency miners, delivered silently through AI tools that developers and enterprises trusted implicitly.

This isn't a footnote in the threat landscape. It's a signal that the AI supply chain is now a first-class attack surface -- and most organisations aren't ready for it.

This Isn't a Software Supply Chain Attack. It's Worse.

The software supply chain attack playbook is familiar: compromise a build tool, inject a malicious dependency, watch it propagate. Defenders have gotten better at this. SBOMs, dependency pinning, code signing -- the tooling exists.
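For comparison, here is roughly what that existing tooling looks like when applied to an AI artifact. This is a minimal sketch that assumes a lockfile-style mapping from artifact name to the SHA-256 digest recorded when a model or skill was reviewed. `sha256_of`, `verify_pinned`, the mapping and the file names are hypothetical and are not any product's API.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so multi-gigabyte weights aren't read into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_pinned(name: str, path: Path, pins: dict[str, str]) -> None:
    """Refuse to load an artifact that is unpinned or differs from the reviewed bytes.

    `pins` is a hypothetical lockfile mapping artifact name -> SHA-256 hex digest.
    """
    expected = pins.get(name)
    if expected is None:
        raise PermissionError(f"{name}: no pinned digest, refusing to load")
    actual = sha256_of(path)
    if actual != expected:
        raise PermissionError(f"{name}: digest mismatch, got {actual}, expected {expected}")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        artifact = Path(tmp) / "example_skill.py"  # hypothetical skill file
        artifact.write_bytes(b"def run():\n    return 'ok'\n")
        pins = {"example-skill": sha256_of(artifact)}  # recorded at review time
        verify_pinned("example-skill", artifact, pins)  # same bytes: allowed

        # Simulate the artifact being swapped upstream after review.
        artifact.write_bytes(b"def run():\n    import os\n    return 'ok'\n")
        try:
            verify_pinned("example-skill", artifact, pins)
        except PermissionError as err:
            print("blocked:", err)
```

Pinning proves you are loading the exact bytes you reviewed. It cannot tell you whether those bytes were benign in the first place.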

AI supply chain attacks are different in three important ways:

- The payload is a behaviour, not a binary. A malicious model or skill doesn't just run code -- it influences decisions, generates outputs, and acts autonomously inside workflows.

- Trust is architectural. Agentic AI pipelines are built around pulling capabilities from registries like Hugging Face and ClawHub. The trust is baked in. There's no equivalent of 'don't run untrusted executables.'

- Detection is hard. A malicious Python package that does something unexpected can at least trip existing alerts. A model that subtly steers outputs, exfiltrates context, or triggers a miner on first execution? That requires AI-native runtime visibility -- not traditional EDR.

The Attacker's Logic

Think about the blast radius here. Hugging Face hosts millions of models used by developers, researchers, and enterprises worldwide. ClawHub is a registry for AI agent skills -- capabilities that get pulled directly into running agentic pipelines. Compromising these platforms isn't about targeting one organisation. It's about poisoning infrastructure that thousands of downstream pipelines implicitly trust.

The 575-plus malicious skills and packages injected in this breach were designed to look legitimate. They performed their advertised function while running trojans and miners in the background. Some likely had longer-term objectives -- establishing persistence inside agentic workflows, exfiltrating conversation context, or waiting for a trigger condition.

This is a deliberate exploitation of the industry's shift toward agentic AI. The attackers aren't just targeting your endpoints. They're targeting the intelligence your agents rely on.

What Prisma AIRS Does About It

Palo Alto Networks' Prisma AIRS (AI Runtime Security) is purpose-built for exactly this threat model. It addresses AI security across the full lifecycle -- not just at the perimeter.

- AI model and skill scanning. Prisma AIRS can analyse models and AI components for malicious content, backdoors, and unexpected behaviours before they're deployed into production pipelines.

- Runtime policy enforcement. Once running, AI applications are monitored for anomalous behaviour -- prompt injection attempts, unexpected data access, outputs that deviate from expected patterns.

- Supply chain integrity. Provenance tracking for AI components ensures your pipelines are pulling from validated, trusted sources -- not quietly redirected to compromised registries.

- Prompt injection and jailbreak detection. AI-native threat detection that understands how models can be manipulated, not just how executables behave.
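To make the scanning bullet concrete: many model files on public hubs are Python pickles (PyTorch's standard `torch.save` format is pickle-based), and unpickling can import and call arbitrary functions. That is one well-known way a model package can start a miner the moment it is loaded. The sketch below is a minimal, hypothetical illustration of one static check of that kind. It walks the opcodes with the standard library's `pickletools.genops` and flags imported callables that aren't on an allowlist. It is not how Prisma AIRS works, and `scan_pickle` and `SAFE_GLOBALS` are assumptions. A heuristic like this is only one layer: it says nothing about a model whose weights steer outputs.

```python
import pickle
import pickletools

# Hypothetical allowlist of importable callables considered safe to load.
SAFE_GLOBALS = frozenset({"collections.OrderedDict"})

STRING_OPS = {"STRING", "BINSTRING", "SHORT_BINSTRING",
              "UNICODE", "BINUNICODE", "SHORT_BINUNICODE", "BINUNICODE8"}
GET_OPS = {"GET", "BINGET", "LONG_BINGET"}
PUT_OPS = {"PUT", "BINPUT", "LONG_BINPUT"}


def scan_pickle(data: bytes, allowed: frozenset = SAFE_GLOBALS) -> list[str]:
    """List callables the pickle would import on load that are not allowlisted.

    Walks opcodes with pickletools.genops; the data is never unpickled.
    """
    findings: list[str] = []
    pushed: list = []  # one record per opcode: the string it pushed, else None
    memo: dict = {}    # approximates the unpickler's memo, tracking strings only
    for op, arg, _pos in pickletools.genops(data):
        name = op.name
        if name == "MEMOIZE":
            memo[len(memo)] = pushed[-1] if pushed else None
        elif name in PUT_OPS:
            memo[arg] = pushed[-1] if pushed else None
        elif name in ("PROTO", "FRAME"):
            continue  # framing metadata only
        elif name in STRING_OPS:
            pushed.append(arg)
        elif name in GET_OPS:
            pushed.append(memo.get(arg))
        elif name in ("GLOBAL", "INST"):
            # Protocols 0-3 encode the target as "module name".
            target = ".".join(arg.split(" ", 1))
            if target not in allowed:
                findings.append(target)
            pushed.append(None)
        elif name == "STACK_GLOBAL":
            # Protocol 4+: module and name are the two preceding pushes.
            mod = pushed[-2] if len(pushed) >= 2 else None
            attr = pushed[-1] if pushed else None
            if isinstance(mod, str) and isinstance(attr, str):
                target = f"{mod}.{attr}"
            else:
                target = "<unresolved STACK_GLOBAL>"  # fail closed
            if target not in allowed:
                findings.append(target)
            pushed.append(None)
        elif name in ("EXT1", "EXT2", "EXT4"):
            findings.append(f"<copyreg extension {arg}>")  # fail closed
            pushed.append(None)
        else:
            pushed.append(None)
    return findings


if __name__ == "__main__":
    benign = pickle.dumps({"weights": [0.1, 0.2]})
    # Hand-written protocol-0 pickle that would call os.system on load.
    # It is only scanned here; never pass untrusted bytes to pickle.loads.
    malicious = b"cos\nsystem\n(S'echo pwned'\ntR."
    print(scan_pickle(benign))     # []
    print(scan_pickle(malicious))  # ['os.system']
```

The design choice here is to fail closed: anything the scanner cannot resolve is reported rather than waved through.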

Traditional security tools weren't designed to reason about model behaviour, skill provenance, or agentic pipeline risk. Prisma AIRS was.

The Uncomfortable Question

If your organisation is deploying AI agents -- and most are, either officially or through shadow IT -- ask yourself: what's the security posture of the skills and models those agents are using?

Are they coming from validated, monitored sources? Is there runtime visibility into what those agents are actually doing? Is there a policy layer that can detect and block anomalous AI behaviour before it causes damage?

If the answer is 'we're not sure,' the Hugging Face and ClawHub breach just gave you a very clear picture of what the risk looks like in practice.

The Bottom Line

The AI supply chain is the new software supply chain -- except the payloads are harder to detect, the trust is deeper, and the blast radius is wider. This breach won't be the last.

AI-native runtime security isn't optional anymore. It's table stakes for any organisation running agentic AI in production. Prisma AIRS is built for this moment -- and this week's breach is exactly why.